In video streaming systems, encoding and decoding are two core processing steps that directly determine video data storage efficiency, transmission bandwidth usage, and terminal playback quality.

Encoding refers to the process of compressing raw video frames (typically pixel matrices in YUV or RGB format) into a compact data stream using specific algorithms. Its primary goal is to minimize data size while maintaining acceptable visual quality.
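To see why compression is needed, it helps to work out how large raw video is. The sketch below assumes 8-bit YUV 4:2:0 frames (1.5 bytes per pixel) and, purely for comparison, an encoded stream of 4 Mbit/s. The function name and the 4 Mbit/s figure are illustrative assumptions, not values from a specific system.

```python
def raw_bitrate_mbps(width, height, fps, bytes_per_pixel=1.5):
    """Uncompressed video bitrate in Mbit/s (1 Mbit = 1e6 bits).

    bytes_per_pixel=1.5 corresponds to 8-bit YUV 4:2:0:
    one full-resolution luma plane plus two quarter-resolution chroma planes.
    """
    bytes_per_frame = width * height * bytes_per_pixel
    return bytes_per_frame * fps * 8 / 1e6


raw = raw_bitrate_mbps(1920, 1080, 25)
print(f"raw 1080p25: {raw:.2f} Mbit/s")  # 622.08

# Assumed encoded bitrate, chosen only to illustrate the order of magnitude.
ASSUMED_ENCODED_MBPS = 4.0
print(f"compression ratio: about {raw / ASSUMED_ENCODED_MBPS:.0f}:1")
```

Under these assumptions a single raw 1080p stream needs several hundred Mbit/s, which is why practical streaming depends on an encoder.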

The encoder outputs a compressed “bitstream,” which is usually encapsulated in a container format (e.g., MP4, TS, FLV) or transmitted directly as an elementary stream.

Decoding is the inverse of encoding: it restores the compressed bitstream into displayable video frames. A decoder must parse the bitstream strictly according to the syntax defined by the coding standard. Decoding must also be conformant, meaning that any compliant decoder produces the same output for the same bitstream. This conformance is the foundation of interoperability.

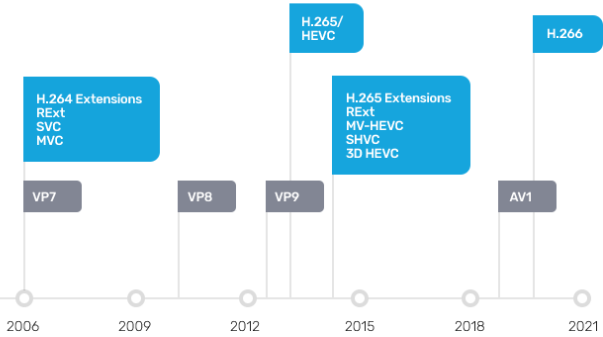

H.264 (AVC, Advanced Video Coding) remains the most widely adopted video coding standard. H.265 (HEVC, High Efficiency Video Coding), finalized in 2013, needs roughly half the bitrate of H.264 for comparable quality and supports 4K/8K ultra-high definition and high dynamic range (HDR) content.

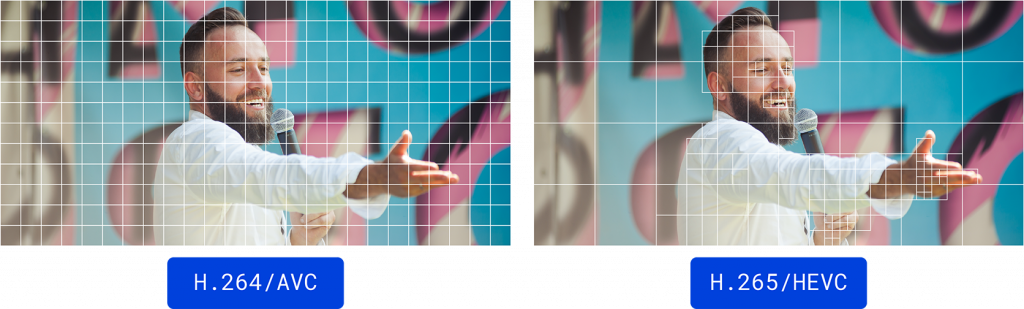

Compression Efficiency: At equivalent PSNR, H.265 needs on average 40–50% less bitrate than H.264; at the same bitrate, it delivers noticeably better subjective quality.
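As a rough illustration of what a 40–50% bitrate saving means in practice, the sketch below converts an H.264 bitrate into the corresponding H.265 range and into storage per day of continuous recording. The 4 Mbit/s H.264 bitrate and the function names are assumptions made for the example.

```python
def h265_bitrate_range(h264_mbps, savings=(0.40, 0.50)):
    """Return (min, max) H.265 bitrate in Mbit/s for a given H.264 bitrate.

    savings is the fractional bitrate reduction range quoted in the text.
    """
    low_saving, high_saving = savings
    return h264_mbps * (1 - high_saving), h264_mbps * (1 - low_saving)


def storage_gb_per_day(mbps):
    """Storage for 24 hours of continuous video, in GB (1 GB = 1000 MB)."""
    seconds_per_day = 86400
    return mbps * seconds_per_day / 8 / 1000


h264 = 4.0  # assumed H.264 bitrate for one 1080p stream, in Mbit/s
lo, hi = h265_bitrate_range(h264)
print(f"H.264: {h264:.1f} Mbit/s, {storage_gb_per_day(h264):.1f} GB/day")
print(f"H.265: {lo:.1f}-{hi:.1f} Mbit/s, "
      f"{storage_gb_per_day(lo):.1f}-{storage_gb_per_day(hi):.1f} GB/day")
```

The saving applies equally to bandwidth and storage, so it scales with the number of streams a server distributes or records.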

However, while H.265 offers superior compression efficiency, it demands substantial computational resources and places higher requirements on terminal hardware decoding capabilities.

So, what hardware and software capabilities determine how many video streams a video server can process?

The concurrent processing capacity of a video streaming server (also known as a media server), that is, how many video streams it can handle simultaneously, is not a fixed value. It is a system-level metric determined by multiple factors, including hardware resources, software architecture, encoding format, resolution, frame rate, transmission protocol, and service type (e.g., transcoding, distribution, recording, AI analysis).

1. The CPU serves as the core computational unit of a video server. Decoding video on the CPU is referred to as software decoding. For example, a 32-core/64-thread CPU can support approximately 80–120 streams when doing nothing but H.264 1080p decoding at 25 fps. Switching to H.265 decoding of 4K streams at 30 fps, however, may cut this to only about 20 streams.

2. GPUs offload video encoding and decoding to dedicated hardware, significantly boosting concurrent processing capacity. Decoding on a GPU is called hardware decoding. GPU acceleration requires software support (e.g., FFmpeg), and the number of channels is limited by GPU memory capacity (each 1080p channel requires approximately 50–100 MB of GPU memory).
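The figures in the two items above can be turned into a back-of-the-envelope capacity estimate. The sketch below is a minimal illustration, not a sizing tool. It assumes that CPU decoding capacity scales linearly with core count from the 32-core reference point, and it uses a hypothetical GPU with 16 GB of memory, of which 1 GB is reserved for other use. The function names and the GPU configuration are assumptions. The memory bound is only an upper limit, since the throughput of the GPU's decode hardware may cap the channel count earlier.

```python
def cpu_streams_scaled(cores, ref_cores=32, ref_streams=(80, 120)):
    """Estimate H.264 1080p25 software-decoding capacity for a CPU.

    Scales the reference range (80-120 streams on 32 cores) linearly with
    core count. Linear scaling is an assumption made for this sketch.
    """
    low, high = ref_streams
    return cores * low // ref_cores, cores * high // ref_cores


def gpu_channels_by_memory(gpu_mem_mb, per_channel_mb, reserved_mb=0):
    """Upper bound on 1080p hardware-decoding channels from GPU memory alone."""
    return (gpu_mem_mb - reserved_mb) // per_channel_mb


# CPU: a hypothetical 16-core server, scaled from the 32-core example.
print("16-core CPU, H.264 1080p25:", cpu_streams_scaled(16))

# GPU: hypothetical 16 GB card with 1 GB reserved, 50-100 MB per channel.
gpu_mem_mb = 16 * 1024
worst = gpu_channels_by_memory(gpu_mem_mb, 100, reserved_mb=1024)
best = gpu_channels_by_memory(gpu_mem_mb, 50, reserved_mb=1024)
print(f"GPU memory bound: {worst}-{best} channels")
```

Real capacity should be confirmed by load testing with the target codec, resolution, and frame rate, because these estimates ignore I/O, network, and any transcoding or analysis work running alongside decoding.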