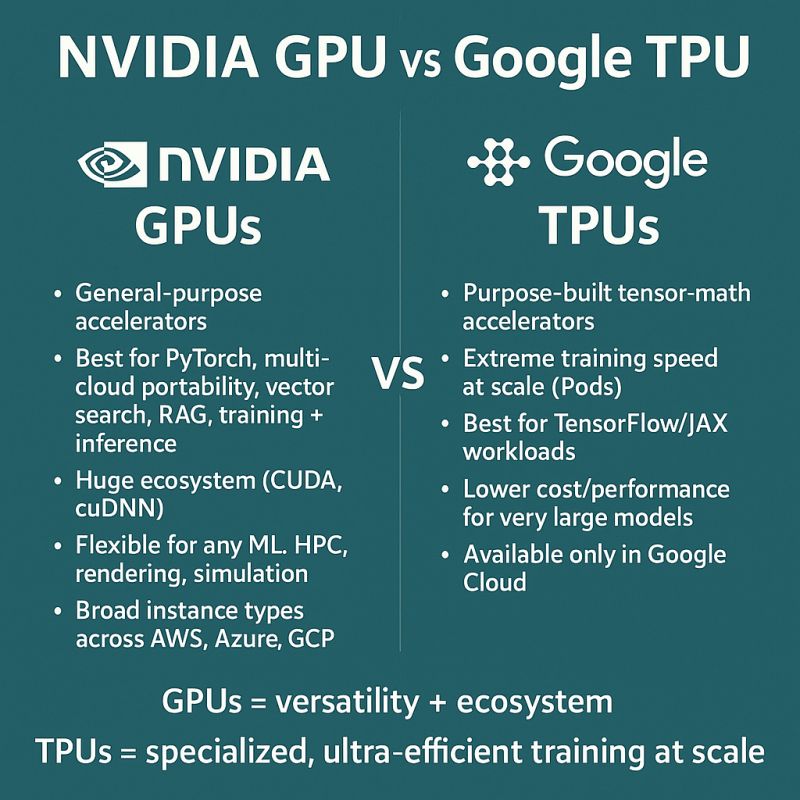

Reports indicate that Google (GOOGLE.US) is intensifying its competition with NVIDIA (NVDA.US) in the AI chip race, while Meta (META.US) emerges as a potential multi-billion-dollar client.

For years, Google has restricted its custom Tensor Processing Units (TPUs) to its own cloud data centers, leasing them to companies running large-scale AI workloads. Now, however, the tech giant is promoting these chips for deployment in customers 'own data centers—a significant strategic shift. Meta is among the clients. Reports indicate Meta is considering a multi-billion-dollar investment to integrate Google's TPUs into its data centers starting in 2027, while also planning to lease TPU from Google Cloud as early as next year. Currently, Meta primarily relies on NVIDIA's GPUs for its AI infrastructure... This marks the beginning of the rivalry between Google's TPUs and NVIDIA's GPUs. So, what exactly are TPU and GPU, and how do they differ?

Introduction

With the explosive growth of artificial intelligence and machine learning applications, the demand for computing hardware has been escalating. As two mainstream accelerators, Graphics Processing Units (GPUs) and Tensor Processing Units (TPUs) play a pivotal role in deep learning. This article conducts an in-depth technical comparison between TPU and GPU from the perspective of hardware underlying principles, revealing their fundamental differences in design philosophy, architectural implementation, and applicable scenarios.

I. Core Design Philosophy and Origin

1. GPU: For flexible parallel processing

The GPU originated from accelerating computer graphics rendering. The essence of graphics rendering lies in parallel processing of massive, independent vertices and pixels (Single Instruction Multiple Data Stream, SIMD, and its broader form Single Instruction Multiple Thread, SIMT). Therefore, the core design of GPUs focuses on providing a vast number of relatively universal arithmetic logic units (ALUs), equipped with complex control logic and cache hierarchies to efficiently manage and schedule thousands of parallel threads. Its goal is to become a "general-purpose parallel processor" that excels in parallel processing, though its computing units themselves are not rigidly fixed for specific operations.

2. TPU: Domain-specific matrix computation

TPU was born from Google's realization that its deep learning inference tasks in data centers were not optimized for energy efficiency or absolute performance when using CPUs and GPUs. The core of neural network computation lies in large-scale matrix multiplication and accumulation (Multiply-Accumulate, MAC), followed by activation functions. Thus, TPU's design philosophy sacrifices versatility by dedicating nearly all silicon area and power consumption to executing matrix multiplication, achieving peak efficiency and performance.

II. The underlying differences in hardware architecture

1. Computing Core: Arithmetic Logic Unit (ALU) vs. Multiplication-Extraction Unit (MXU)

GPU: Large-scale scalar-vector ALU

The computing core of a GPU is the Stream Processor (SP), which is essentially a scalar Arithmetic Logic Unit (ALU). Multiple SPs form a Stream Multiprocessor (SM, known by different names in NVIDIA's architecture). While modern GPU ALUs support Fused Multiply-Add (FMA) operations, their basic workflow involves fetching operands from registers or cache, processing them in the ALU, and writing the results back. To handle the complex memory access patterns in graphics and general computing, GPUs are equipped with multi-level caches (L1, L2) and shared memory, featuring intricate control logic.

TPU: systolic array

The TPU's core is a massive Matrix Multiply Unit (MXU) with a pulsating array architecture.

Working principle: In a pulsating array, the data processing unit (here the MAC unit) arranges itself in the grid in a rhythm-like pattern, synchronized with the heartbeat. Data flows in from the top and sides of the array, undergoes multiplication and addition operations at each node, and part of the results is transmitted along the data flow direction to the next node. Ultimately, the computational results exit from the other side of the array.

Key advantages:

· Maximum data reuse: Input data is reused by multiple computing units as it flows through the array, significantly reducing the number of external memory reads. This is the key to TPU's high energy efficiency.

· Reduced memory access requirements: As data circulates and reuses within the array, the absolute demand for high-bandwidth memory is significantly lower compared to the GPU's parallel ALU mode.

· Simplified control: The entire MXU is programmed as a unified unit with minimal instructions, whereas the GPU must coordinate thousands of threads to collaboratively perform the same large matrix multiplication.

2. Memory subsystem

GPU: Complex Hierarchical Cache

The GPU features a comprehensive memory hierarchy: registers → L1 cache/share memory → L2 cache → HBM/GDDR. This architecture is designed to support flexible general-purpose parallel computing. The shared memory enables low-latency communication between threads within a block, while the cache captures memory access patterns with high temporal and spatial locality.

TPU: Transient Memory Unit with Large Unified Buffer and High Bandwidth Memory

TPU's memory architecture is more direct and dedicated.

Unified Buffer: This massive on-chip memory module adjacent to the Matrix Unit (MXU) primarily functions as a temporary storage area for input/output matrices. Data is first preloaded from the High Bandwidth Memory (HBM), then the MXU executes computations by reading data from the unified buffer with exceptional bandwidth and minimal power consumption. This architecture directly optimizes the standard matrix operation workflow: "input retrieval → computation → output delivery".

Simplified caching: TPU's cache hierarchy is generally simpler than GPU's, as it doesn't need to handle complex random access patterns.

3. Data Types and Precision

GPU: Full Precision Support

Modern GPUs support a wide range of precision types, including FP64, FP32, FP16, BF16, INT8, INT4, and TF32. This versatility enables them to handle diverse workloads spanning scientific computing, graphics rendering, AI training, and inference.

TPU: Precision Optimized for AI

TPU has been dedicated to AI applications from the very beginning.

· From BF16/FP16 to BF16/FP32: TPUv2/v3 primarily employs BF16 for matrix multiplication and FP32 for accumulation, which has been proven to be one of the optimal trade-offs for training accuracy and efficiency in deep learning.

· supports low-precision inference: TPU also supports lower precision types like INT8 for inference, further boosting throughput and energy efficiency.

· Lack of high-precision computing: TPU typically lacks or offers inadequate support for FP64 high-performance computing, as it is not designed for such scenarios.

III. Software Stack and Programming Model

GPU: General Parallel Programming Model

NVIDIA's CUDA and OpenCL are standard GPU programming frameworks. Developers must structure problems into a hierarchical framework of grids, thread blocks, and threads, with explicit memory management (including global memory, shared memory, and registers). While highly flexible, these frameworks entail significant programming complexity.

TPU: Compiler-Centric Model

TPU programming heavily depends on compilers. Developers typically use high-level frameworks (e.g., TensorFlow, JAX, PyTorch), and compilers like XLA translate the model's computational graph into TPU instructions.

· -level graph optimization: The compiler performs comprehensive optimizations across the entire computational graph, including operator fusion, memory layout transformation, and data stream scheduling for pulsating arrays.

· 'Send and Forget': The program sends a large computational subgraph to the TPU, which executes it efficiently without the need to launch thousands of fine-grained threads like a GPU would.

IV. Performance and Energy Efficiency Comparison

metric | GPU | TPU |

peak computing power | Both achieve peak computational performance at their respective precision levels (FP32 for GPUs and BF16 for TPUs). However, the TPU's computational power derives more' purely' from its MXU, optimized for matrix multiplication. | When performing matrix multiplication and convolution operations in deep learning, TPU demonstrates significantly higher energy efficiency (performance per watt) than its CPU and GPU counterparts. |

energy efficiency | The performance is relatively low because its architecture includes redundant control logic and cache for general purpose. | It is highly efficient, as its architecture eliminates redundant components in the GPU for universal compatibility and minimizes energy consumption during data transfer. |

flexibility | High-end. Capable of running games, performing scientific simulations, processing graphics, and conducting AI training/inference. | Limited. It is almost exclusively dependent on neural network workloads, resulting in extremely low efficiency for non-matrix operations. |

applicable scene | General parallel computing, graphics rendering, scientific computing, AI training and inference | Large-scale Neural Network Training and Inference, Matrix-intensive Computing |

V. Summary: Intuitive Analogy of Architectural Differences

GPU: General Workers Team

A GPU resembles a construction crew of thousands of ordinary workers. Each worker (ALU) wields a hammer or saw (representing general-purpose computing power) to perform diverse tasks. Through a sophisticated command system (SM, Warp Scheduler) and a centralized tool repository (cache hierarchy), they collaborate to build a skyscraper (large-scale parallel computing). This system is highly adaptable, capable of handling various construction challenges.

TPU: Specialized Automated Factory

TPU operates like a highly customized automated assembly line. This pulsating array is specifically designed to produce a single product—matrix multiplication. Raw materials (input matrices) enter from one end of the line, pass through a series of meticulously engineered automated processing stations (MAC units), and exit as finished products (output matrices) from the other end. While highly efficient and specialized for this single task, it cannot be repurposed for other products.

Conclusion

The fundamental distinction between TPU and GPU lies in their architectural approaches: a general-purpose parallel computing engine versus a specialized matrix computation engine. While GPUs 'emulate' parallelism through massive general-purpose cores and sophisticated cache systems, TPU physically implements the most efficient matrix computations via its dedicated Pulsar arrays.

The choice between GPU and TPU depends on specific application scenarios: GPUs are more suitable for environments requiring high flexibility and diverse workloads, while TPUs offer distinct advantages in performance and energy efficiency for large-scale, batch neural network training and inference tasks.

With the continuous growth of AI computing demands, both architectures are evolving: GPUs are incorporating more AI-specific hardware (such as Tensor Cores), while TPUs are expanding their applicability. In the future, we may see more hybrid architectures emerge, combining the strengths of both to provide optimal solutions for diverse computing needs.